AI Ethics & Risks: The Real Cost of Progress

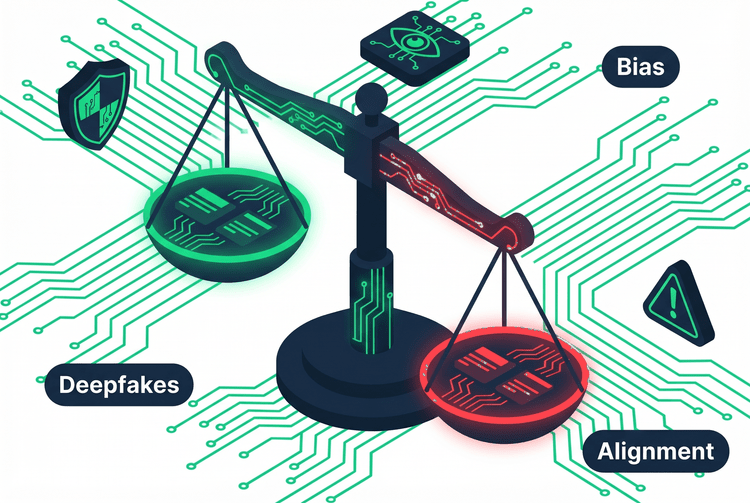

Bias, deepfakes, copyright, and the alignment problem. Explore the unintended consequences of AI and what it means to build, deploy, and use these tools responsibly.

What is AI Bias? Why AI Systems Can Be Unfair — and What We Can Do About It

AI bias isn't a glitch — it's a reflection of the data and decisions that go into building these systems. Here's what it means, why it matters, and how to spot it.

Read Article →

A practical guide for users and buyers on how to evaluate AI tools. Learn the key criteria, questions to ask vendors, and red flags to watch for before you buy.

Explore the global AI governance landscape, from the EU AI Act to the divergent approaches of the US and China, and the challenge of regulating a technology that outpaces law.

Explore how AI is fueling a new geopolitical arms race, threatening democratic institutions with propaganda and disinformation, and reshaping global power dynamics.

Uncover the hidden environmental impact of artificial intelligence, from the massive energy and water consumption of data centers to the growing crisis of AI-driven e-waste.

Learn how AI is concentrating power in the hands of a few tech giants through data monopolies, the compute gap, and a talent flywheel, and what it means for the future.

Discover how AI is supercharging surveillance in both the physical and digital worlds, from facial recognition and smart cameras to data brokers and inferential tracking.

An exploration of how AI is used in high-stakes decisions that shape lives, from hiring and policing to lending and healthcare. Learn the risks and what's at stake.

A deep dive into algorithmic bias and discrimination. Learn where bias comes from (data, models, humans) and how it leads to real-world harm in hiring, lending, and more.

Learn how small, individual AI harms can scale into major systemic risks through aggregation, feedback loops, and homogenization. A critical concept in AI ethics.

Discover the three main failure points of AI systems. This guide explains how bad data, flawed models, and poor deployment lead to real-world AI harms.

Explore the landscape of AI harms, from individual and group harms like bias and discrimination to societal risks like misinformation and erosion of trust. A plain-language guide.

GPT stands for Generative Pre-trained Transformer. Learn what each part of this powerful acronym means and why it’s the engine behind the current AI revolution.

AI surveillance is rapidly growing, offering benefits like enhanced security and crime prevention, but also raising significant concerns about privacy, freedom, and potential misuse.

As AI continues to evolve, it brings both immense opportunities and significant ethical challenges. This article explores the key ethical dilemmas, including accountability, privacy, and the potential for AI to either enhance or undermine human freedoms. It raises important questions about how we can control AI’s development to ensure it serves humanity responsibly.

AI has the power to create a world of limitless possibilities—or one of total control. Will AI lead us to a utopia where automation enhances our lives, solves global challenges, and unlocks human potential? Or are we heading toward a dystopia where AI surveillance, job displacement, and unchecked algorithms dictate our future? As AI continues to evolve, the path we take depends on the choices we make today. Let’s explore the possibilities, risks, and the critical decisions that will shape the AI-driven world of tomorrow.

AI is supposed to be objective, but bias can sneak in through the very data it learns from—shaping everything from hiring decisions to criminal justice outcomes. When AI models are trained on biased datasets, they can reinforce and even amplify existing inequalities.

Knowledge Is the Best Defense.

The more you understand about how AI works and where it can go wrong, the better equipped you are to use it responsibly. Explore all our learning paths.

Explore All Learning Paths →